Naive me in 2015: Thinking WebRTC is just another browser API.

Experienced me after more than five years and dozens of WebRTC applications: WebRTC-based applications differ from other web applications and come with a unique set of challenges. They also come with solutions, if you have a little experience behind you.

In this post, I will review some of the things I wish I had known back then.

But first, some WebRTC basics. WebRTC is:

- an open source project

- a real time communications framework

- secure

- updated frequently

- used in many major platforms and applications today

- available in all modern browsers

But many issues can still lead to user complaints such as:

- “The app doesn’t work on my iPhone.”

- “Hi. Hello? Can you hear me? I can hear you, but you can’t hear me.”

- “Yes, I can see you, but your video is very blurry.”

- “My microphone is not working.”

- “I can’t connect. I think my Internet must be too slow.”

My goal in this post is to give you some insight into the problems inherent to WebRTC, as well as the solutions that our team at WebRTC.ventures and I have used in the past.

Some of the areas where a WebRTC application differs from a basic web application are:

- Interoperability

- Scalability

- Networking

- Security

- Testing

- Debugging/Troubleshooting

Let’s take them one at a time.

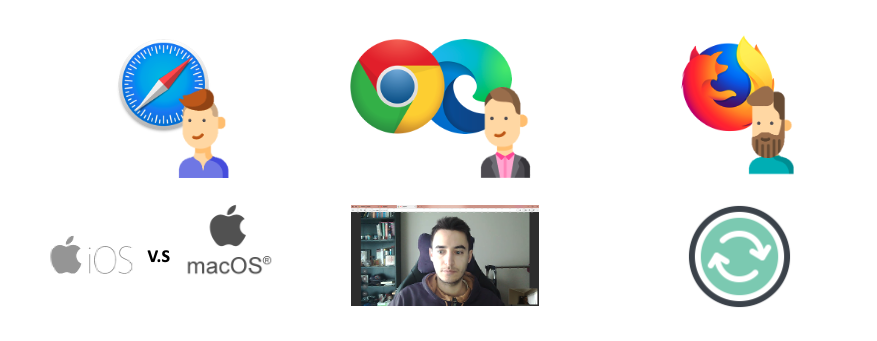

Interoperability

WebRTC was originally built for 1-1 communication. When used in this straightforward way, there should be no major issues in how devices interact with each other. Still, problems can arise.

Problems:

- Cameras and microphones vary in quality and capability from device to device

- Advanced features are not supported in some browsers and devices

- Chrome, Edge, Firefox, and Safari each have their own known issues

- Safari poses the most challenges, particularly on iOS, where some features such as screen sharing are unavailable

Solutions:

- User notifications are essential! The user needs to know what is going on or you lose all credibility.

- Detect the browser and its capabilities in advance and, again, notify the user

- Log issues using the WebRTC Statistics API

- Debug issues using tools like WebRTC Internals (chrome://webrtc-internals) or Wireshark
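To make the advance detection idea concrete, here is a minimal sketch of a pre-call capability check. It probes for features rather than parsing the user agent string, which is one possible approach and not necessarily the one we use in production. The `BrowserLike` shape, the `checkCapabilities` function, and the message wording are all hypothetical names chosen for illustration.

```typescript
// Minimal shape of the browser globals we probe. Passing it in as an
// argument keeps the check testable outside a real browser.
interface BrowserLike {
  RTCPeerConnection?: unknown;
  navigator?: {
    mediaDevices?: {
      getUserMedia?: unknown;
      getDisplayMedia?: unknown;
    };
  };
}

interface CapabilityReport {
  canCall: boolean;
  canShareScreen: boolean;
  messages: string[]; // user-facing notifications (wording is illustrative)
}

function checkCapabilities(env: BrowserLike): CapabilityReport {
  const hasPeerConnection = typeof env.RTCPeerConnection === "function";
  const media = env.navigator?.mediaDevices;
  const hasGetUserMedia = typeof media?.getUserMedia === "function";
  const canShareScreen = typeof media?.getDisplayMedia === "function";

  const messages: string[] = [];
  if (!hasPeerConnection) {
    messages.push("This browser does not support real-time calls.");
  }
  if (!hasGetUserMedia) {
    messages.push("Camera and microphone access is unavailable in this browser.");
  }
  if (!canShareScreen) {
    messages.push("Screen sharing is unavailable in this browser.");
  }

  return {
    canCall: hasPeerConnection && hasGetUserMedia,
    canShareScreen,
    messages,
  };
}
```

In a browser you would pass the global `window` object and show every entry of `messages` to the user before the call starts, so that nobody discovers a missing feature halfway through a meeting.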

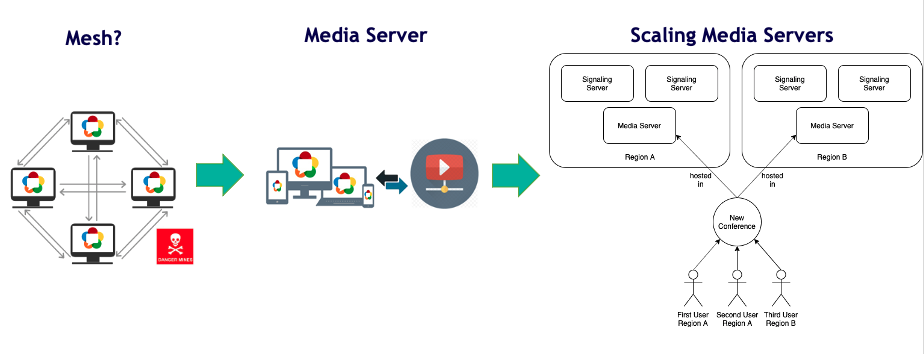

Scalability

A mesh architecture is the approach my naive self would have taken to build a WebRTC application. Connecting all the users directly to one another and sending audio and video between them seems like a logical way to go. But WebRTC does not scale well when used this way.

Problem:

- As you add users, every participant has to encode and upload a separate stream to each of the others, so you quickly run into CPU and bandwidth issues

Solution:

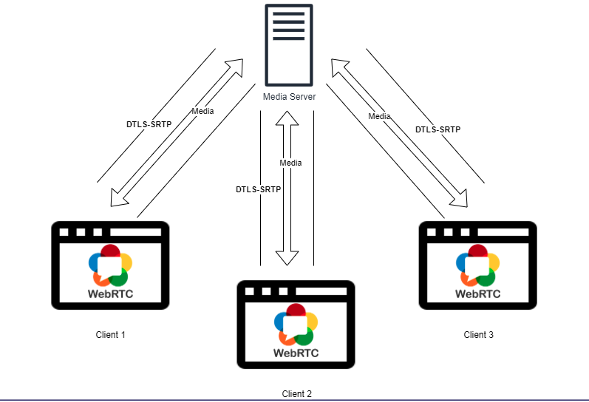

- Use a Media Server to distribute the video to the participants

- This will also allow more advanced features like integration with other technologies and processing video streams
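A little arithmetic shows why mesh runs out of steam. The sketch below counts streams per participant for a mesh call and for a call routed through a media server. It assumes a forwarding-style media server where each participant uploads a single stream and downloads one stream per other participant; a server that mixes streams would behave differently. The function and field names are illustrative.

```typescript
// Per-call stream counts; all names here are illustrative.
interface CallLoad {
  uploadsPerUser: number;
  downloadsPerUser: number;
  totalConnections: number;
}

function assertValid(participants: number): void {
  if (!Number.isInteger(participants) || participants < 2) {
    throw new RangeError("a call needs at least 2 participants");
  }
}

// Mesh: every participant connects directly to every other participant.
function meshLoad(participants: number): CallLoad {
  assertValid(participants);
  const others = participants - 1;
  return {
    uploadsPerUser: others,
    downloadsPerUser: others,
    totalConnections: (participants * others) / 2,
  };
}

// Forwarding media server: one upload per user, the server fans it out.
function mediaServerLoad(participants: number): CallLoad {
  assertValid(participants);
  return {
    uploadsPerUser: 1,
    downloadsPerUser: participants - 1,
    totalConnections: participants,
  };
}

console.log(meshLoad(6));
console.log(mediaServerLoad(6));
```

For six participants, mesh means five uploads per user and 15 peer connections in total, while the media server version needs one upload per user and six connections. The upload side is what usually hurts first, since each upload has to be encoded and most home connections have limited upstream bandwidth.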

Problem:

- Once we have solved participant scalability with a media server, we hit the limit of how powerful a single media server can be. At some point, you need more than one server. So, what if you have a whole lot of participants? Perhaps over 1,000?

Solution:

- Scaling Media Servers. A typical approach here is to use a dispatcher that will distribute the calls. This can be done randomly, but a better practice is to use geographical cascading. This means that users are routed to a media server based on where they are located. For example, if we are both in the U.S., we would both be directed to a media server in the U.S.
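As a rough illustration of the dispatcher idea, the sketch below picks a media server for a user by region and falls back to any server with spare capacity. It covers only the dispatch step, not the relaying between servers that full cascading involves. The `MediaServer` shape, the region labels, and the least-loaded tie-breaking rule are assumptions made for this example.

```typescript
// Hypothetical description of a media server known to the dispatcher.
interface MediaServer {
  id: string;
  region: string;
  activeSessions: number;
  maxSessions: number;
}

// Prefer the least-loaded server in the user's region; if that region has
// no capacity left, fall back to the least-loaded server anywhere.
function pickMediaServer(
  servers: MediaServer[],
  userRegion: string
): MediaServer | undefined {
  const available = servers.filter((s) => s.activeSessions < s.maxSessions);
  const local = available.filter((s) => s.region === userRegion);
  const pool = local.length > 0 ? local : available;

  return pool.reduce<MediaServer | undefined>(
    (best, s) =>
      best === undefined || s.activeSessions < best.activeSessions ? s : best,
    undefined
  );
}

const servers: MediaServer[] = [
  { id: "us-1", region: "us", activeSessions: 40, maxSessions: 50 },
  { id: "us-2", region: "us", activeSessions: 10, maxSessions: 50 },
  { id: "eu-1", region: "eu", activeSessions: 5, maxSessions: 50 },
];

console.log(pickMediaServer(servers, "us")?.id);
```

With the sample data, a user in the "us" region lands on `us-2`, the less busy of the two U.S. servers. A function like this returns `undefined` when every server is full, which is the moment to notify the user or start another server.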

To solve these scalability issues, there are both open source and commercial solutions.

Some of the common commercial options that we have used at WebRTC.ventures are listed below. They can scale up to one million participants, depending on the use case.

Open Source options we also use or have used:

Networking Issues: Restrictive Networks

Because WebRTC sends media over UDP (User Datagram Protocol) by default, it has more trouble getting through restrictive networks than typical web applications.

Problems:

- Proxy and firewall rules

- Proxies that require authentication from clients

- Proxies that block access to certain IP addresses

- Network address translation (NAT), which hides a device's IP address from the public internet

Solution:

- Keep and continually review your checklist of requirements

- Monitor the issues, keep logs, and notify

- Traverse NATs and restrictive firewalls by relaying media through a TURN server
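Here is a sketch of what a TURN-enabled connection configuration can look like. The object has the same shape as the `iceServers` and `iceTransportPolicy` options accepted by `RTCPeerConnection`, but the types are declared locally so the example stands on its own. The host name, the credentials, and the choice of ports (3478 for UDP and TCP, 443 for TURN over TLS) are assumptions for illustration; your TURN deployment may differ.

```typescript
// Local stand-ins for the browser's ICE configuration types.
interface IceServerEntry {
  urls: string[];
  username?: string;
  credential?: string;
}

interface PeerConfig {
  iceServers: IceServerEntry[];
  iceTransportPolicy: "all" | "relay";
}

function buildPeerConfig(
  turnHost: string,
  username: string,
  credential: string,
  forceRelay = false
): PeerConfig {
  return {
    iceServers: [
      // STUN lets the client discover its public address behind a NAT.
      { urls: [`stun:${turnHost}:3478`] },
      // TURN relays media when a direct path cannot be established.
      // Offering TCP and TLS on 443 helps on networks that block UDP.
      {
        urls: [
          `turn:${turnHost}:3478?transport=udp`,
          `turn:${turnHost}:3478?transport=tcp`,
          `turns:${turnHost}:443?transport=tcp`,
        ],
        username,
        credential,
      },
    ],
    // "relay" forces all media through TURN, which is handy for testing
    // that the TURN server actually works.
    iceTransportPolicy: forceRelay ? "relay" : "all",
  };
}

console.log(JSON.stringify(buildPeerConfig("turn.example.com", "user", "secret"), null, 2));
```

In the browser, the returned object can be passed to `new RTCPeerConnection(...)`. Credentials should come from your backend and be short-lived, never hard-coded in the client.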

Networking Issues: Congestion

As with restrictive networks, there are technical issues that can lead to network congestion.

Problems:

- Too many hosts in a local network

- Low bandwidth

- Interference from outside sources or faulty cabling in headphones or other user devices

Solution:

- WebRTC has error resilience mechanisms built in. However, they can only do so much if, for example, you really do have low bandwidth. Optimizing video bandwidth will be the focus of a future blog post!

- For these other issues, advance detection, monitoring, logging, and notifications are key
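One way to approach the monitoring and logging part is to sample the connection statistics at intervals and compute packet loss between samples. The sketch below works on two snapshots carrying the `packetsReceived` and `packetsLost` counters that the WebRTC Statistics API reports for inbound RTP streams. The snapshot type, the function names, and the 5% warning threshold are assumptions for illustration.

```typescript
// The two cumulative counters we need from an inbound RTP stats report.
interface InboundSnapshot {
  packetsReceived: number;
  packetsLost: number;
}

// Fraction of packets lost between two snapshots, from 0 to 1.
function lossRatio(prev: InboundSnapshot, curr: InboundSnapshot): number {
  const received = curr.packetsReceived - prev.packetsReceived;
  const lost = curr.packetsLost - prev.packetsLost;
  const expected = received + lost;
  if (expected <= 0) {
    return 0; // nothing arrived and nothing was reported lost in this interval
  }
  // The lost counter can decrease when late or duplicate packets show up,
  // so clamp the interval's loss at zero.
  return Math.max(lost, 0) / expected;
}

// Hypothetical threshold: warn the user above 5% loss.
const LOSS_WARNING_THRESHOLD = 0.05;

function shouldWarnUser(prev: InboundSnapshot, curr: InboundSnapshot): boolean {
  return lossRatio(prev, curr) > LOSS_WARNING_THRESHOLD;
}

const earlier: InboundSnapshot = { packetsReceived: 1000, packetsLost: 10 };
const later: InboundSnapshot = { packetsReceived: 1900, packetsLost: 110 };
console.log(lossRatio(earlier, later), shouldWarnUser(earlier, later));
```

In the example, 900 packets arrived and 100 were lost during the interval, which is a loss of 10% and enough to trigger the warning. In a real app, the snapshots would be read from `getStats()` every few seconds and the ratio logged alongside the user's browser and network details.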

WebRTC Security

WebRTC is secure and encrypted by design, but end to end only in the ideal 1:1 case. When there is a media server, the media has to be decrypted on the server, and security can be compromised there. This is common in other technologies as well, with Zoom being the most recent focus of controversy. I went into more detail on WebRTC security and privacy issues in my blog post, WebRTC Security Overview in 2020. Google Security Engineer Natalie Silvanovich was also Arin Sime’s guest on WebRTC Live last November.

Solution:

A new solution has emerged called E2EME, or End-to-End Media Encryption. E2EME uses the Insertable Streams API to apply the Secure Frames (SFrame) mechanism. Kudos to CoSMo Software and Google for developing this. It, too, will be the focus of a future blog post.

WebRTC Testing

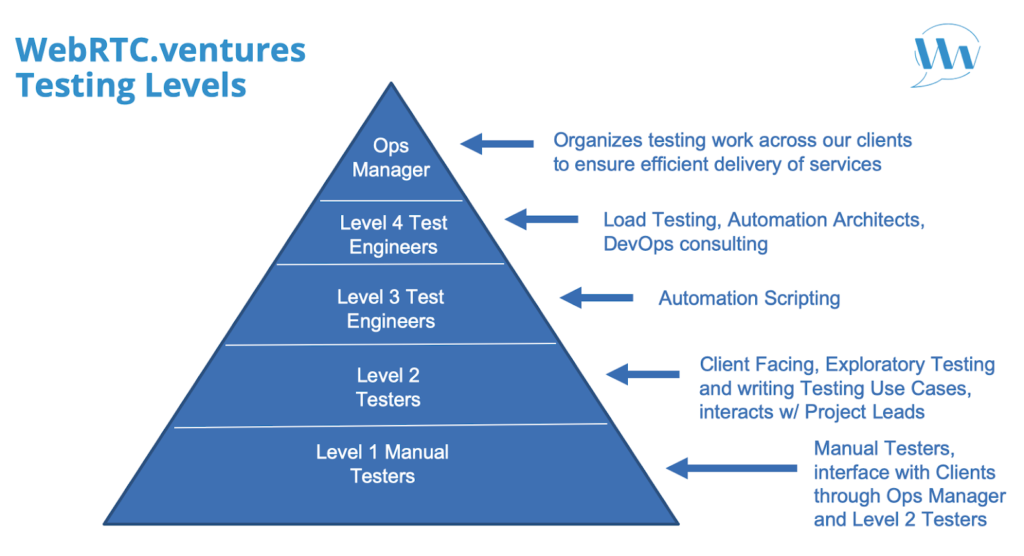

WebRTC testing is not as simple as regular web testing. We still need functional testing and unit testing, but testing WebRTC apps brings an additional set of problems.

Problems:

- Compatibility testing does not equal interoperability testing. Having more than one user connecting with other users adds a degree of complexity.

- Performance testing requires us to run N browsers concurrently

Good news here! WebRTC.ventures is making our extensive testing expertise available to those outside of our regular development clients. We have formalized our testing, which is now available as a standalone service.

Back to the user issues

Now that we have covered some of the common problems with WebRTC, let’s go back to the user issues I outlined earlier in this post and see if we can guess what could be the problem.

- “The app doesn’t work on my iPhone,” said someone using Chrome on iPhone. They will not be able to successfully handle a WebRTC call. This is something we could detect in advance using a pre-call test. Whatever the case, we must make sure the user receives a notification.

- “Hi. Hello? Can you hear me? I can hear you, but you can’t hear me,” said someone who dismissed or blocked the microphone permission prompt. This can be a UI issue.

- “Yes, I can see you, but your video is very blurry,” said someone using a video app that uses older codecs or doesn’t use SVC or simulcast.

- “My microphone is not working,” said someone with faulty headphones.

- “I can’t connect. I think my Internet must be too slow,” said someone about an app that doesn’t prioritize audio and optimize available bandwidth. Could we make our app more flexible to use just audio or can we optimize their bandwidth?
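The last case, prioritizing audio when bandwidth is tight, can be sketched as a simple decision function. It takes an estimate of the available outgoing bitrate, such as the `availableOutgoingBitrate` value the WebRTC Statistics API reports for the active candidate pair, and picks a media mode. The mode names and both thresholds are hypothetical and would need tuning for a real app.

```typescript
type MediaMode = "audio-only" | "low-video" | "full-video";

// Hypothetical thresholds in bits per second.
const AUDIO_ONLY_BELOW_BPS = 150000;
const LOW_VIDEO_BELOW_BPS = 600000;

function chooseMediaMode(availableBitrateBps: number | undefined): MediaMode {
  if (availableBitrateBps === undefined) {
    // No estimate yet: start conservatively and upgrade once we know more.
    return "low-video";
  }
  if (availableBitrateBps < AUDIO_ONLY_BELOW_BPS) {
    return "audio-only"; // keep the conversation alive, drop the video
  }
  if (availableBitrateBps < LOW_VIDEO_BELOW_BPS) {
    return "low-video";
  }
  return "full-video";
}

console.log(chooseMediaMode(100000));
console.log(chooseMediaMode(2000000));
```

Whatever the thresholds, the point is to tell the user why the video went away instead of letting the call fail silently.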

Apps today are held to a high standard. Users expect them to work, always. And in today’s remote work environment, they need to work, always! Although WebRTC is a newer technology, we need to achieve a high level of reliability. As I hope I have shown here, that is not always so easy!

If you are considering building a WebRTC app, or you need to test an existing one, you need to turn to a team with deep experience. You can find that at WebRTC.ventures. Contact us today!